Citation

If this project is useful in your work, please cite the paper.

    @inproceedings{wang2026diversed,
      title={DIVERSED: Relaxed Speculative Decoding via Dynamic Ensemble Verification},
      author={Wang, Ziyi and Kasa, Siva Rajesh and M S, Ankith and Kasa, Santhosh Kumar and Zou, Jiaru and Negi, Sumit and Zhang, Ruqi and Jiang, Nan and Song, Qifan},
      booktitle={Proceedings of The 29th International Conference on Artificial Intelligence and Statistics},
      year={2026}
    }

Why this matters for the industry

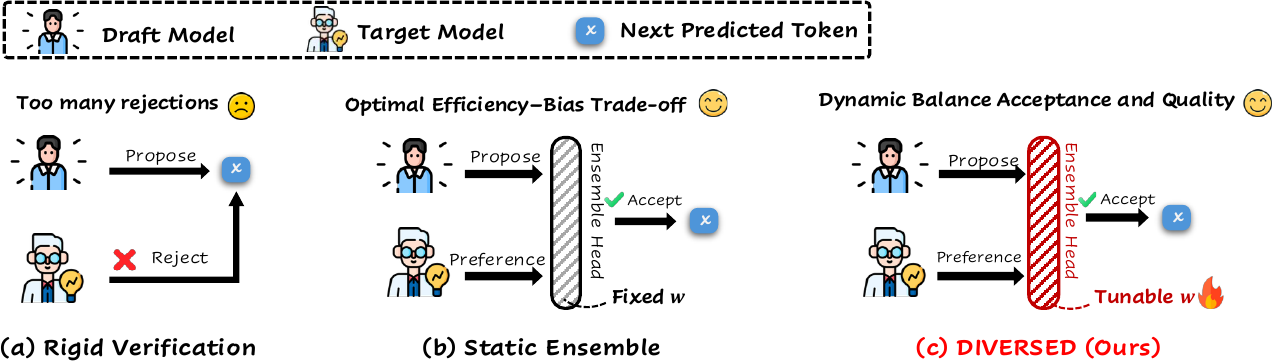

LLM inference is one of the largest cost centers for model providers. Speculative decoding is already deployed in production at Google (AI Overviews) and is standard in open-source serving stacks like vLLM and SGLang. Yet these deployments share the same constraint: verification is all-or-nothing. A drafted token is accepted only under a rule that keeps the output distribution identical to the target model's, so there is no way to trade a small amount of fidelity for extra speed.
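To make the "all-or-nothing" constraint concrete, here is a minimal sketch of the standard speculative-sampling check for a single drafted token. It is the textbook accept/resample rule, not code from the DIVERSED repository, and the function names (`verify_token`, `expected_acceptance_rate`) are hypothetical, chosen only for illustration.

```python
import random

def verify_token(p_target, q_draft, token, rng=random):
    """Exact verification of one drafted token (illustrative sketch).

    p_target, q_draft: lists of probabilities over the same vocabulary.
    token: index that the draft model sampled from q_draft.
    Returns (accepted, output_token).
    """
    # Accept with probability min(1, p(x) / q(x)).
    accept_prob = min(1.0, p_target[token] / q_draft[token])
    if rng.random() < accept_prob:
        return True, token
    # On rejection, resample from the residual max(p - q, 0). This step is
    # what keeps the final output distributed exactly as p_target.
    residual = [max(p - q, 0.0) for p, q in zip(p_target, q_draft)]
    new_token = rng.choices(range(len(residual)), weights=residual, k=1)[0]
    return False, new_token

def expected_acceptance_rate(p_target, q_draft):
    """Probability that a drafted token is accepted: sum_x min(p(x), q(x))."""
    return sum(min(p, q) for p, q in zip(p_target, q_draft))
```

Under this rule the acceptance rate is fixed by how closely the draft matches the target. The only way to raise it is a better draft model, which is the limitation the paragraph above describes.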

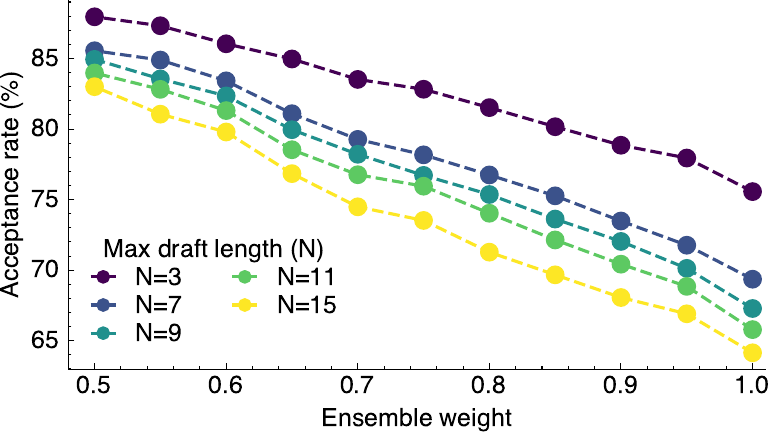

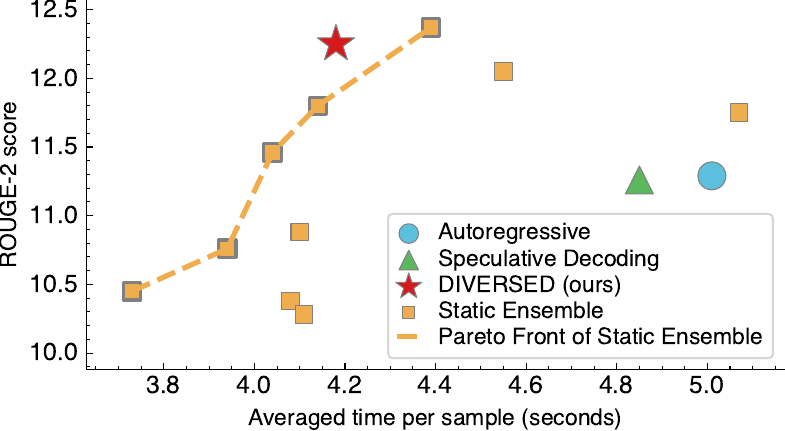

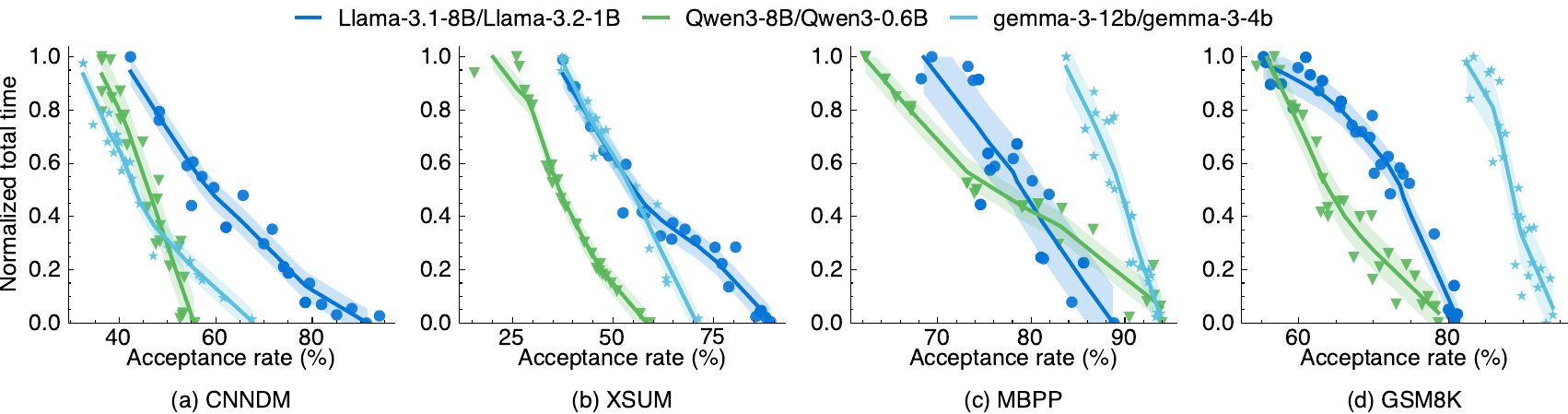

DIVERSED removes that binary. It gives providers a principled, learned mechanism to offer continuous quality-latency trade-offs from a single model pair. For API providers, this opens the door to usage-based pricing that tracks actual quality delivered per token, not just which model was called. For on-device inference, it means one deployment that adapts its fidelity to the task at hand.
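As a rough illustration of what a continuous trade-off can look like, the sketch below verifies drafted tokens against a blend of the target and draft distributions instead of the target alone. This is a toy under stated assumptions, not the DIVERSED verifier: the weight `w` here is a fixed scalar passed in by hand, whereas the paper describes a learned, dynamic ensemble, and the function name `relaxed_acceptance_rate` is hypothetical.

```python
def relaxed_acceptance_rate(p_target, q_draft, w):
    """Acceptance rate when verifying against (1 - w) * p + w * q.

    w = 0 recovers exact verification against the target distribution;
    w = 1 accepts every drafted token. `w` is an assumed, hand-set knob.
    """
    mixed = [(1.0 - w) * p + w * q for p, q in zip(p_target, q_draft)]
    # Same formula as exact verification, with the blend in place of p.
    return sum(min(m, q) for m, q in zip(mixed, q_draft))

p = [0.5, 0.5]   # toy target distribution
q = [0.9, 0.1]   # toy draft distribution
rates = [relaxed_acceptance_rate(p, q, w) for w in (0.0, 0.5, 1.0)]
```

In this toy setting the acceptance rate rises linearly with `w`, from the exact-verification rate at `w = 0` to 1 at `w = 1`, while the output distribution drifts from the target toward the draft. A single model pair can therefore sit anywhere along the quality-latency curve.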

The code is open source and works with standard Hugging Face model pairs. The ensemble head adds negligible overhead. If you serve LLMs at scale, this is a drop-in upgrade to your speculative decoding pipeline.